Oh, for the good ol’ days of political opinion polling — how innocent and straightforward it used to be. Even in the past five years it has changed beyond recognition.

Back then, it was still relatively straightforward: survey a group of people, usually between 1,000 and 2,000, ‘weight’ the answers to make them more representative of the whole UK population, and interpret the results. The methodological arguments that raged back then among the pointy-heads — telephone polls vs online polls; how to deal with past vote recall and turnout — have either now been settled or are at best secondary issues.

Polling companies were still, only half a decade ago, headed by larger-than-life public figures, more likely to have had a journalistic background than a statistical one, who regularly went on TV to “read the runes” for a grateful nation. For them, polling, like politics, was an art not a science.

Today, the question everyone asks during an election is still the same one (who is going to win?), but the world of opinion polling looks very different. We have, of course, all been burned by a succession of difficult elections with unexpected results that various polling companies failed to predict, and as a result the media and public are rightly much more circumspect. We want to know more before believing anyone’s predictions.

‘Data scientists’ are gradually replacing those grand ‘pollsters’ who used to offer confident insights with a neat turn of phrase. These new number nerds are likely to be under 30 and might well know nothing about politics. Instead of simple surveys and uniform national swings, they are using complex statistical models. For people whose bread and butter relies on being seen as a savvy political ‘expert’, all this amounts to an existential threat.

The most powerful data modelling technique in politics at the moment is something called MRP. It stands for “multilevel regression with post-stratification” (not exactly catchy), and the number of people in the UK who fully understand it can be counted on two hands.

But, roughly speaking, you conduct a huge survey, normally of 10,000 people or more so that you have sufficient numbers to look at small subsets, and you then analyse which characteristics best predict the answer to the question you’re interested in: for example, whether race, education or income is more predictive of how people are going to vote (that’s the ‘multilevel regression’ part).

Then, once you have identified the most predictive characteristics, you combine what you know about the people in each constituency with any local effects you observe in your sample to estimate the outcome in every constituency in the land (that’s the ‘post-stratification’ part).

With this method, instead of crude national swings, you create something close to a simulated version of the whole electorate, in miniature, in all its complex glory.
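For readers who like to see the arithmetic, here is a toy sketch of the two steps in Python. It is not YouGov’s or Focaldata’s model: every number is invented, the demographic groups and the two constituencies are hypothetical, and a simple “shrink small groups toward the national average” rule (with an assumed strength of 50 pseudo-respondents) stands in for a real multilevel regression.

```python
# Toy illustration only: every number below is invented, and simple shrinkage
# toward the national average stands in for a real multilevel regression.

# Hypothetical survey tallies per demographic cell: (respondents, supporters).
SURVEY_CELLS = {
    ("degree", "under_40"): (400, 120),
    ("degree", "40_plus"): (300, 120),
    ("no_degree", "under_40"): (20, 14),   # tiny cell, so its raw 70% is noisy
    ("no_degree", "40_plus"): (280, 168),
}

# Assumed tuning constant: how many "pseudo-respondents" at the national
# average are mixed into each cell. A real model estimates this from the data.
PRIOR_STRENGTH = 50


def pooled_cell_estimates(cells, prior_strength=PRIOR_STRENGTH):
    """Estimate support in each cell, pulling small cells toward the overall rate."""
    total_n = sum(n for n, _ in cells.values())
    total_yes = sum(yes for _, yes in cells.values())
    overall = total_yes / total_n
    return {
        cell: (yes + prior_strength * overall) / (n + prior_strength)
        for cell, (n, yes) in cells.items()
    }


def poststratify(cell_estimates, census_counts):
    """Weight cell estimates by how many people of each type live in a seat."""
    population = sum(census_counts.values())
    weighted = sum(cell_estimates[cell] * count
                   for cell, count in census_counts.items())
    return weighted / population


# Hypothetical census counts of each type of voter in two made-up seats.
CONSTITUENCIES = {
    "University Town": {
        ("degree", "under_40"): 30000,
        ("degree", "40_plus"): 15000,
        ("no_degree", "under_40"): 10000,
        ("no_degree", "40_plus"): 15000,
    },
    "Market Town": {
        ("degree", "under_40"): 8000,
        ("degree", "40_plus"): 12000,
        ("no_degree", "under_40"): 15000,
        ("no_degree", "40_plus"): 35000,
    },
}

cell_estimates = pooled_cell_estimates(SURVEY_CELLS)
seat_estimates = {
    name: poststratify(cell_estimates, counts)
    for name, counts in CONSTITUENCIES.items()
}

for name, share in seat_estimates.items():
    print(f"{name}: {share:.1%} estimated support")
```

The point of the sketch is that neither made-up seat needs many survey respondents of its own. The survey tells you how each type of voter tends to answer, and the census tells you how many of each type live in each seat.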

The defining moment for MRP arrived during the general election of 2017. The conventional opinion polls had shown a dramatic narrowing, but most still showed Theresa May’s Conservatives significantly ahead on election day. The Westminster bubble was expecting her to build on, not lose, David Cameron’s majority.

But as well as their conventional poll, YouGov had also run an MRP model, and, instead of a majority, this one pointed to something very different: a hung parliament, with the Tories losing ‘safe’ seats such as Kensington, Canterbury and Ipswich. Two weeks before polling day they gave it to the Times, who put it on the front page with the headline: Shock Poll Predicts Tory Losses.

Almost nobody believed it, but it turned out to be astonishingly accurate.

In the polling world, the pattern of the turbulent past few elections has been one of repeatedly trying to correct for the mistakes of the previous election (and sometimes overcorrecting in the process). Because YouGov’s model turned out to be so accurate, right down to Canterbury and Kensington, this time everybody is waiting for the YouGov MRP to drop. It is expected next week, and if it shows a large Tory majority, I would expect the pound to surge against the dollar within seconds.

At UnHerd, though, we’re interested in the things everyone else is ignoring, and so we’ll leave to YouGov the unenviable task of forecasting the main result.

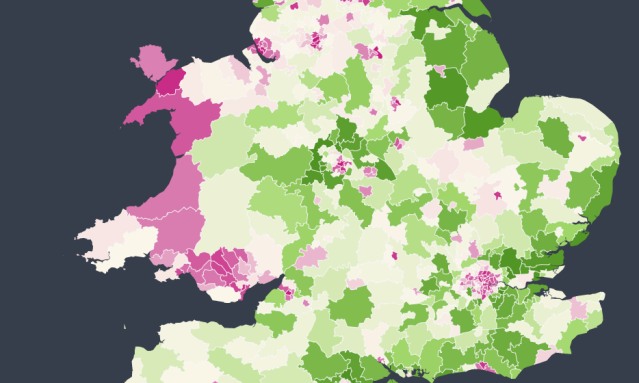

Instead, we’re doing something slightly different. We’ve teamed up with an impressive startup called Focaldata that specialises in MRP — and we’re doing what we believe is a first. Using the same technique that predicted the result last time, we’re mapping how the 632 constituencies of Britain differ on six big issues of the moment that aren’t being much talked about in the election campaign: attitudes to the Royal Family, gender, immigration, religion, free speech and tax. We’ve called it UnHerd Britain.

The first set of results, out today, reveals how different parts of the country feel about the Royal Family. The results are striking. Prince Andrew may be all over the news, and the new season of The Crown may be keeping people busy across the country, but nobody gets to vote on the Royal Family.

For the first time, today, we can see what would happen if they did — down to individual constituencies. For Buckingham Palace, it’s a mixed bag of news. Overall, their position is still secure: only 2 of 632 constituencies have more people disagreeing than agreeing with the phrase, “I am a strong supporter of the continued reign of the Royal Family.”

But all is not rosy. Scotland, while still tilting towards support of the royals in all 59 seats, is dramatically less in favour than the rest of the UK; the large cities, including London, are similarly much less enthusiastic. The groups that are growing fastest in our society — university graduates and ethnic minorities — are the groups least excited about the Royals.

It may sometimes feel like Brexit is the cause of all our divisions, but as UnHerd Britain will reveal in full Technicolor over the next few weeks, the Royal Family reign over a deeply divided kingdom across many more issues than that.
