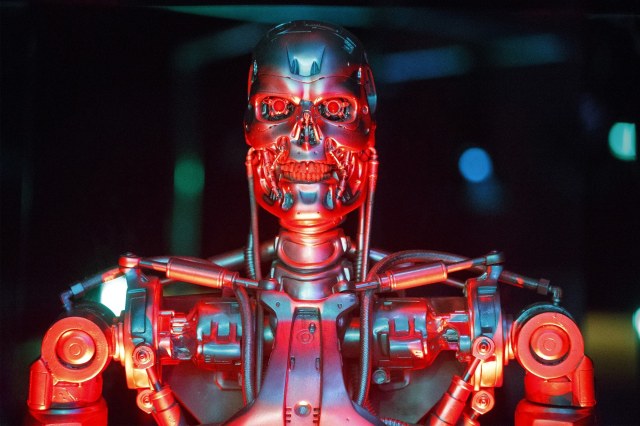

One of the more fierce Terminators. Credit: Tolga Akmen/Anadolu Agency/Getty Images

Existential threats to the human race include asteroids, supervolcanoes and nuclear war.

Artificial intelligence might put paid to us too. But how? Until recently, there were two basic scenarios: one in which a super-smart computer decides the world is better off without humans – and turns against us, Terminator style; the other in which a powerful, but essentially mindless, AI system follows some poorly thought-out instructions to the letter – heedless of unforeseen and calamitous side-effects. This is the Magician’s Apprentice scenario, which Tom Chivers considers here.

Now, thanks to some timely research from the University of Massachusetts, Amherst, a third scenario presents itself – AI will kill us through global warming, because training the neural networks behind AI models uses so much energy.

The findings are the subject of an MIT Technology Review article by Karen Hao:

“In a new paper, researchers at the University of Massachusetts, Amherst, performed a life cycle assessment for training several common large AI models. They found that the process can emit more than 626,000 pounds of carbon dioxide equivalent—nearly five times the lifetime emissions of the average American car (and that includes manufacture of the car itself).”

By way of further comparison, the average human being generates 11,023 pounds of CO2 in the course of a year, and the average American human being 36,156 pounds.
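To put those figures side by side, here is a rough back-of-envelope calculation. It uses only the numbers quoted above; the variable and function names are illustrative, and the result is a simple ratio rather than anything taken from the paper itself.

```python
# Figures quoted in the article, all in pounds of CO2 (equivalent).
TRAINING_EMISSIONS_LB = 626_000   # upper figure reported for training a large AI model
HUMAN_PER_YEAR_LB = 11_023        # average human being, one year
AMERICAN_PER_YEAR_LB = 36_156     # average American, one year


def years_equivalent(total_lb: float, per_year_lb: float) -> float:
    """How many person-years of emissions a given total corresponds to."""
    return total_lb / per_year_lb


human_years = years_equivalent(TRAINING_EMISSIONS_LB, HUMAN_PER_YEAR_LB)
american_years = years_equivalent(TRAINING_EMISSIONS_LB, AMERICAN_PER_YEAR_LB)

print(f"Average human:    about {human_years:.1f} years of emissions")
print(f"Average American: about {american_years:.1f} years of emissions")
```

On these numbers, the most carbon-intensive training run is equivalent to roughly 57 years of emissions for the average person, or about 17 years for the average American.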

Machine learning is so energy hungry because it works through what Hao describes as “exhaustive trial and error.” This requires the application of colossal quantities of brute processing power – and therefore lots and lots of electricity.

We tend to think of digital technologies as belonging to the clean, green, ‘dematerialised’ economy of the future – completely different from the smoke-belching heavy industries of the past.

But cyberspace is an energy-hog too. For instance, it’s estimated that the data-processing tasks required to make Bitcoin work consumed 30 terawatt hours of electricity in 2017 – about the same as the whole of the Republic of Ireland. Meanwhile the global banking system consumes around 100 terawatt hours.

How much could AI add to the load? The comparison that the researchers make to the emissions of a car is alarming, but of course there are many more cars in the world (more than a billion) than neural networks. The question therefore is whether we can foresee a future in which artificially intelligent, machine learning systems are so numerous that they make a significant difference to the world’s carbon footprint.

Here we need to distinguish between the neural networks on which AI models are trained and the software products derived from that training. For instance, the digital assistants that can (sort of) understand what you say to them are not using vast amounts of computer processing power to do their jobs. Most of the hard work (of figuring out how to process human speech) has already been done in some lab somewhere, on an immensely more powerful (and energy-hungry) computer than the one in your phone.

However, these assistants are still very limited in their capabilities. Fast forward a few decades and the world might be full of super-sophisticated AI agents that either replace or greatly enhance the work that people do. This would be an economy, indeed a civilisation, in which machine learning is ubiquitous and continuous.

While we spend a lot of time thinking about the impact of all that artificial intelligence on human society, we also need to think about the natural resources required to turn the global economy into a planet-sized learning machine. Of course, the environmental impact will be lessened if the electricity it all runs on is derived from renewable, low-carbon sources. So let’s hope that wind and solar power keep on getting cheaper.

An expansion of machine learning could also help with balancing out the variable supply of renewable power. Because of physics, there’s a limit to how far electricity can be moved from where it’s generated to where it’s needed. So the power needed to light up a city, charge an electric car or run a factory needs to come from a reasonably local source (at most, hundreds of miles away – not thousands).

These constraints, however, don’t apply to data. If you need some heavy-duty processing done, then where in the world it physically happens is not a primary consideration. The demand for processing (and the associated energy use) can originate in one place and be supplied from another. As long as we have flexible ‘cloud’ computing systems that can match supply and demand just about anywhere on the planet, then we should be able to exploit surpluses (and compensate for deficiencies) of renewable energy wherever they occur.
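The matching idea can be sketched in a few lines of code. This is a minimal illustration only: the region names and megawatt figures are invented, `Region` and `place_job` are hypothetical names, and a real scheduler would also have to weigh things this sketch ignores, such as network latency and the cost of moving data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Region:
    """A hypothetical data-centre region; all figures are made up for illustration."""
    name: str
    renewable_supply_mw: float
    local_demand_mw: float

    @property
    def surplus_mw(self) -> float:
        # Renewable power left over once local demand has been met.
        return self.renewable_supply_mw - self.local_demand_mw


def place_job(job_mw: float, regions: list[Region]) -> Optional[Region]:
    """Pick the region with the largest renewable surplus that can cover the job."""
    candidates = [r for r in regions if r.surplus_mw >= job_mw]
    if not candidates:
        return None  # no region has enough spare renewable power right now
    return max(candidates, key=lambda r: r.surplus_mw)


regions = [
    Region("windy-north", renewable_supply_mw=900, local_demand_mw=700),
    Region("sunny-south", renewable_supply_mw=1200, local_demand_mw=850),
    Region("calm-east", renewable_supply_mw=400, local_demand_mw=600),
]

chosen = place_job(50, regions)
print(chosen.name if chosen else "defer the job")
```

With these invented numbers, a 50-megawatt job goes to the region with the biggest surplus, while a job too large for any region's surplus would be deferred until supply picks up.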

However, we also need big leaps in the efficiency of machine learning technology – i.e. it must be able to achieve more with less processing capacity. This isn’t just for environmental reasons.

Karen Hao quotes Emma Strubell – lead author of the University of Massachusetts, Amherst research:

“The results underscore another growing problem in AI, too: the sheer intensity of resources now required to produce paper-worthy results has made it increasingly challenging for people working in academia to continue contributing to research.

“‘This trend toward training huge models on tons of data is not feasible for academics—grad students especially, because we don’t have the computational resources,’ says Strubell. ‘So there’s an issue of equitable access between researchers in academia versus researchers in industry.’”

This is an important point. Unless the resource efficiency of machine learning improves, its rate of progress will be impeded and the direction of its development skewed to the objectives of those who can most afford the computer time and the electricity bills.

A technology that will remake the global economy, and that could be a great leveller, would instead entrench existing inequalities.
