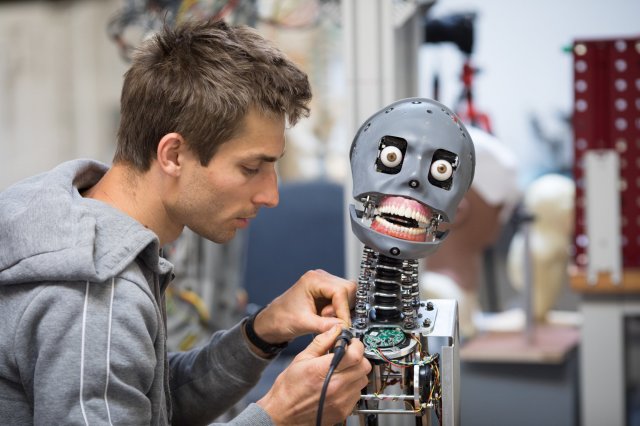

Could you bring yourself to switch a robot off? Credit: Matt Cardy / Getty

In his 1977 triumph, writer Chris Boucher defined Robophobia as a “pathological fear of robots brought about by their lack of body language”. Forty-one years ago – four decades before we became fashionably alarmed about the impact of AI on our lives – Boucher imagined a world utterly dependent on robots; a humanoid slave race, living ‘among’ us, but not ‘of’ us.

In Boucher’s story, murders are taking place on a mining craft: a Christie-esque setting, claustrophobic and closed-off, so the murderer must be among the assembled and isolated cast, who are being bumped off one by one. One character, Poul, is forced to confront the hitherto literally unimaginable concept that the taken-for-granted robot crew, which outnumbers the humans on board by orders of magnitude, may contain the culprit, and he has a complete nervous breakdown.

He suddenly sees the robots, not as an indivisible, near-invisible mass of hidden-in-plain-sight workers, but as… people. Almost-people: Boucher’s robots are humanoid, stylised, calm: they look like ‘us’, but they are (clearly) also not-us. It’s therefore narratively convincing when Poul begins to scream about “the walking dead”, and collapses under the weight of his dread.

That this 1977 masterpiece was for an episode in a Tom Baker season of ‘Doctor Who’ partly explains the programme’s longevity. Every so often it produces drama that qualifies as art: science fiction, on the surface about some silly made-up society a billion light years from Earth and impossibly far in the future, but which yet compels the viewer to re-examine things we take for granted: what does it take to be human? What are the minimal requirements for entry?

Before you scoff at my childhood fondness for a science fiction murder story with ‘impossible’ robots and an invented phobia about them, consider this highly contemporary and entirely non-fictional scientific investigation, by Horstmann and co-workers, into what humans do when confronted with something that, while clearly un-human, exhibits human-style behaviour.

In the experiment, participants were randomised to a set of tasks with an obviously toy robot under a number of different conditions (sometimes the robot was chatty, sometimes formal, that sort of thing). After a lot of deceptive faffing, the participants were asked to switch the machine off. Sometimes the robot would “object” to this (“No! Please do not switch me off! I am scared that it will not brighten up again!”), sometimes it wouldn’t utter a damn thing.

Surprisingly (unless you watched The Robots of Death as a child, when ‘obviously’ would be a fitter adverb), participants were less likely to switch off the robot if it first objected. It’s enough for a machine to resemble a human being, in cartoon-like fashion, making no pretence at synth-like behaviour beyond having a metal head, a basic face and an electronic voice, for human beings to project human need onto its smoothly impersonal surface. The walking dead they may be: but they’re sufficiently like us to elicit an emotional response. I wonder how the participants felt, the ones who switched off the machine even as it babbled about the dying of the light? A Poul-like sense of dread would be my bet.

If a robot tells me its feelings are hurt, then I respond accordingly (“Alexa! Do you mind if I switch you off, and talk to Siri instead?”). I anthropomorphise, and ‘see’ the object as sufficiently human to warrant compassion (or terror, once they outnumber us). It’s the act of seeing which imbues an artificial construct with an agency that its base material doesn’t warrant. This must be happening at a limbic level: ironically, conscious awareness of a machine’s lack of consciousness cannot prevent the projection of consciousness onto its creepy mechanical face (in The Robots of Death, the Doctor’s companion calls robots “creepy mechanical men”).

Anyone who’s ever felt a spasm of pity at the ‘plight’ of a stone, picked from a beach, examined carefully, considered for a home decoration but then tossed aside, will understand this, I think. It’s recognition that, however loudly one’s ego insists on one’s uniquely important attributes, from a very small distance one is almost completely non-differentiable from the rest of the human race. We’re all just biological machines, neuronically and neurotically fizzing away, loudly insisting that ‘I’ am so very, very different from ‘you’. While I might be just one pebble among billions, yet I’m still somehow special. And not ‘you’. You are like-me but not-me.

The miracle of humanity isn’t that we’re alive, move around, copulate and die: the miracle is that we can perceive the tiny differences between ourselves at all, when we’re biologically and aesthetically and psychologically so entirely similar: I expect when the Martian finally arrives it’ll struggle to tell us apart.

It’s in those tiny differences that love must exist: else why have the emotion at all? (Why choose one rock over another?) All that laser-like energy required in order to see the other: it has to go somewhere; it’s the most powerful force in existence. And thus the problem we’re going to have with robots, the ones that are like-us but not-us: we won’t be able to choose how we see them either.

In Dan Mangan’s 2010 song Robots, a spurned lover likens himself and his former bed-partner to automata (“Your robot heart is bleeding out” — more shades of Poul’s hysteria). Indeed, the singer seems to suggest that being “robot boy” is the best defence against pain. But this doesn’t work, and “that little voice in the back of your mind” eventually admits that “Robots need love too. They want to be loved by you. They want to be loved by you.”

The song makes me cry, every time I hear it. Like I’m pre-programmed – like I have no conscious choice in the matter. The fear of lovelessness isn’t “a flaw in the design”, as Mr Mangan wonders, it’s part of being human. I’m so worried that the light won’t come back. Robotically — without volition — I make myself ready for love.

And since we’re building this new breed of intelligent robots in our own image, what do you expect they’ll sing when we switch them on, and how do you think you’ll react to their song? The robotic laws of life, of ‘seeing’, won’t care whether the object of your gaze is fashioned from flesh, or metal. We’re human, which means, I’m afraid, that we’ll want to be loved by them. Just like the robots we are.
