There’s a fascinating and slightly unnerving new study out in preprint, by scientists at the Eindhoven University of Technology.

Here’s the technical version. There’s a system called “Registered Reports” (RRs), in which scientists preregister their hypotheses before carrying out a study, and scientific journals agree to publish the study on the strength of its methods rather than its results. The new study found that Registered Reports are only about half as likely as standard, non-RR research to confirm their hypotheses.

And here’s why it matters. At the moment, science has some profound problems. Journals tend to publish only “novel”, “exciting” results. So if you do an experiment to see whether wine gums cause halitosis, and it comes back negative, it probably won’t get published. As I said recently, the result is that journals fill up with “positive” studies while “negative” ones sit in file drawers, so the scientific literature is skewed.

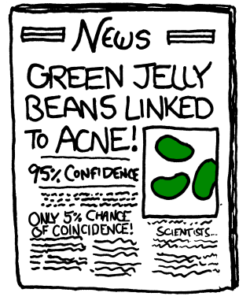

Worse: scientists live in a “publish or perish” ecosystem. If you don’t get studies published in journals, you don’t get promoted. THAT means that if your study comes back negative, you’re incentivised to come up with new hypotheses that fit interesting-looking patterns in the data: “hypothesising after results are known”, or HARKing. Again, as I’ve written before: in one field, video game research, 130 papers that gather data in the same way chop that data up in 157 different ways. If it’s not obvious why that could lead to spurious results, read this XKCD comic.
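To see why slicing the same data many ways produces spurious results, here is a minimal sketch of the multiple-comparisons arithmetic. It assumes each analysis is an independent test at the conventional 5% significance threshold, run on data where there is no real effect; real analyses of one dataset are correlated, so this somewhat overstates the inflation. The function names are hypothetical, chosen for illustration.

```python
import random

ALPHA = 0.05  # conventional significance threshold (an assumption for illustration)


def chance_of_false_positive(k: int, alpha: float = ALPHA) -> float:
    """Probability that at least one of k independent tests on pure noise
    comes out 'significant': 1 - (1 - alpha)^k."""
    return 1 - (1 - alpha) ** k


def simulate(k: int, trials: int = 20_000, alpha: float = ALPHA, seed: int = 1) -> float:
    """Monte Carlo check of the formula above.

    When there is no real effect, a p-value from a continuous test is
    uniformly distributed on [0, 1], so each 'analysis' is just a random draw.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Run k analyses; count the trial if any one of them looks significant.
        if any(rng.random() < alpha for _ in range(k)):
            hits += 1
    return hits / trials


if __name__ == "__main__":
    for k in (1, 5, 20):
        print(f"{k:>2} analyses: exact {chance_of_false_positive(k):.2f}, "
              f"simulated {simulate(k):.2f}")
```

Under these assumptions, one analysis gives the advertised 5% false-positive rate, but trying 20 different analyses of the same null data gives roughly a 64% chance that at least one “works”.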

Registered Reports are designed to avoid both these problems. By signing up to them, journals avoid the file-drawer problem: they promise to publish the research, whatever it finds, as long as it follows its preregistered methods. And scientists can’t go back and HARK, because they’ve preregistered their hypotheses; but they have no incentive to anyway, because the study will be published whatever they find. It really is an elegant solution to a lot of science’s problems.

What the new study found was that more than 90% of psychological studies published under the standard regime confirmed their original hypotheses, but fewer than 50% of RRs did. Taken at face value, that means that nearly half of the positive results in the standard literature are simply wrong. RRs reveal just how much hoop-jumping goes on in science.
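The face-value arithmetic can be spelled out in a few lines. This is a back-of-the-envelope sketch that uses the round figures of 90% and 50%, and it assumes, as the face-value reading does, that the Registered Report rate reflects how often hypotheses in the field are really true. The function name is hypothetical.

```python
def excess_positives(standard_rate: float, rr_rate: float) -> tuple[float, float]:
    """Return (excess confirmations per 100 standard papers,
    share of the standard papers' positive results that are excess).

    Assumes the Registered Report confirmation rate is an unbiased
    estimate of how often hypotheses are actually confirmed.
    """
    excess = standard_rate - rr_rate  # confirmations the RR baseline can't account for
    share_of_positives = excess / standard_rate
    return excess * 100, share_of_positives


per_100, share = excess_positives(0.90, 0.50)
print(f"About {per_100:.0f} of every 100 standard papers, "
      f"or {share:.0%} of their positive results, would be excess.")
```

With these inputs it reports about 40 excess confirmations per 100 papers, or 44% of the positive results. Because the figures are “more than 90%” and “fewer than 50%”, the real gap under this reading is, if anything, a little wider.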

It’s a little more complicated than that (although one of the authors suspects that it’s “more complicated” in the sense that even RRs can’t eradicate all bias, and so are still overstating the results). But this is probably a good ballpark measure. So if you’re reading a study, and it doesn’t mention “Registered Reports” or at least “preregistration”, then you should be roughly 50% less likely to trust its results; and if you’re reading a news report about a scientific study, and it doesn’t tell you whether the study was preregistered or not, you should do the same. And complain to the author on Twitter while you’re at it.
