'These systems have the potential to become powerful enough, even without consciousness, to control the world.' (Wiktor Szymanowicz/Future Publishing/Getty)

On the first day of September, Guido Reichstadter made his way into San Francisco’s SoMa neighborhood, and positioned himself near an entrance at Anthropic’s headquarters. Beneath his silver-framed glasses, the 45-year-old activist wore an expression of calm concern. Alone in the shadow of the 10-story office building, he seemed almost frail. He brought a folding chair along with him. Beside it, he set up an A-frame chalkboard that read in big block letters: “Hunger Strike Day 1, Anthropic: Stop the Race to General Intelligence!”

In a post on X, Reichstadter explained: “I am calling on Anthropic’s management, directors and employees to immediately stop their reckless actions which are harming our society and to work to remediate the harm that has already been done.” Days later, filmmaker and former AI researcher Michaël Trazzi launched his own campaign, inspired by Reichstadter’s, outside Google DeepMind’s London headquarters.

The cross-continental demonstration took hold, earning press coverage in Business Insider, Futurism, and The Verge, among others, even if Anthropic and DeepMind themselves did not respond. After 30 days of consuming only vitamins and electrolytes, Reichstadter ended his participation in the protest. “We who have everything at stake,” he wrote, “cannot stand by while this malignant conspiracy against the safety and security of the human race flourishes under the aid and protection of the corrupt and inept governments of this world.”

***

In the months after the hunger strike, I connected with Reichstadter through some mutual acquaintances on X. I was told that he was part of a larger anti-AI social movement cropping up in San Francisco, and that he had helped found an organization called Stop AI. The city is at the center of the AI boom, and of the corresponding safety movement, but it’s also the beating heart of American counterculture. I wondered what this burgeoning social resistance might look like, so I asked Reichstadter to meet.

He agrees, and we make plans to go hiking in the Berkeley Hills. Given Reichstadter’s past, the natural setting feels appropriate. With a background in climate and other issue-driven activism, in April 2022 he went on a two-week hunger strike outside the offices of Miami-Dade County Mayor Daniella Levine Cava to bring attention to climate change. In June that same year, the father of two spent 28 hours atop the Frederick Douglass Memorial Bridge in Washington, D.C. to protest the Supreme Court decision overturning Roe v. Wade. “Civil resistance works,” he said in an Instagram video facing out over the Anacostia River. “Learn about it… That’s how we’re going to get the radical change we need in the shortest time necessary.”

Out on the trail, Reichstadter walks me through his journey into anti-AI activism. He says he was still in college when it first occurred to him that super-powerful AI could cause serious human harm. With the launch of OpenAI’s large language model ChatGPT in 2022, his fears became more concrete. Now, as the technology continues to advance at breakneck speed, Reichstadter’s efforts have become increasingly urgent. “These systems,” he tells me, “have the potential to become powerful enough, even without consciousness, to control the world.”

Reichstadter first came to San Francisco in 2024, after meeting a guy named Sam Kirchner through a group called Pause AI. The pair apparently bonded over the idea of using civil resistance and direct action, which Pause AI does not allow, to bring attention to the risks surrounding the development of super-intelligent AI. That led to a new group: Stop AI. Reichstadter is no longer connected with it (he left last August amid internal disputes) but still maintains ties, including legal ones. He’s awaiting trial with another Stop AI member on charges related to non-violent protests last February at OpenAI’s headquarters. Lately, Reichstadter has been acting independently and recruiting more people for the cause. “This is not necessarily about reaching everybody,” he says. “It’s about finding a viable coalition of individuals who are willing to push back.”

***

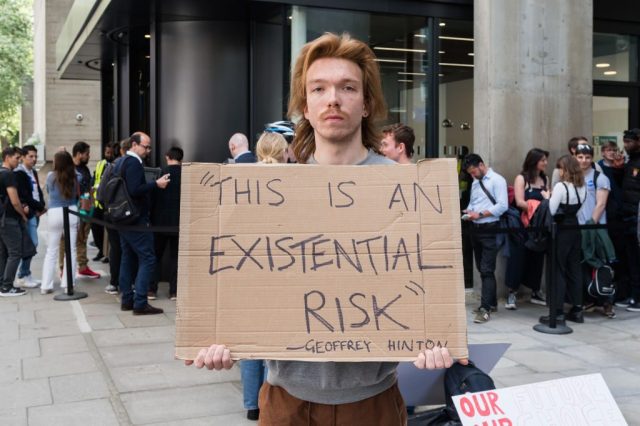

The idea that superintelligent AI might lead to catastrophic harm or human extinction, once the domain of theorists and philosophers, has gained momentum among some experts. The discourse surrounding the extinction threat — or “x-risk” as it’s known in some corners of the internet — became especially intense following the release of ChatGPT. More than 1,000 tech leaders and experts, including Elon Musk, signed a March 2023 letter calling for tech companies to pause further AI development. In May the same year, Nobel laureate and pioneering “AI godfather” Geoffrey Hinton dramatically left his job at Google to warn about the dangers associated with the rapid development of artificial intelligence. Weeks later, CEOs from leading AI labs, including Anthropic, DeepMind, and OpenAI, acknowledged that the extinction risk from AI is on par with that of pandemics or nuclear war.

Experts disagree about what the actual risk might look like, and how likely any given scenario might be. One argument suggests that superintelligent AI systems pose a risk to human beings if their goals are not precisely aligned with human values. The idea is demonstrated by Nick Bostrom’s “paperclip maximizer” thought experiment, in which a powerful AGI is given the directive to maximize the number of paperclips in existence, even if that means eliminating human beings in the process. Others worry that bad actors could misuse AI to cause more conventional harms, from global war and disease to economic and ecological collapse.

Even so, big tech firms have continued full speed ahead toward increasingly powerful AI systems, with critics ever more worried in turn. Stuart Russell, a professor of Computer Science at UC Berkeley and one of the world’s leading researchers in artificial intelligence, has likened the situation to an “arms race”. “For governments to allow private entities to essentially play Russian roulette with every human being on earth is, in my view, a total dereliction of duty,” he told AFP during last week’s India AI Impact Summit. The warning comes as researchers at some of the world’s biggest AI firms have also tendered their resignations. As Mrinank Sharma, who led Anthropic’s safeguards research team, wrote in an open letter to his colleagues: “The world is in peril. And not just from AI, or bioweapons, but from a whole series of interconnected crises unfolding in this very moment.”

***

In some ways, Stop AI is an effort to harness those anxieties into collective action. It’s an approach that’s been used by radical environmentalist groups in the UK, notably Extinction Rebellion and Just Stop Oil, to bring about systemic change. Traditionally, those within the AI safety movement have taken the insider approach, working with labs and research centers and think tanks to influence safety procedures and government policy. That, along with concerns about being taken seriously, has encouraged caution when it comes to practical campaigning. Stop AI, however, explicitly favors civil disobedience. “History has shown us that non-violent movements can meet their goals quickly when a large enough group of people cooperate towards a common goal,” reads the group’s website. “Non-violent resistance is the only answer.”

As its name would suggest, Stop AI’s end goal is a permanent and binding international ban on the development of both Artificial General Intelligence (AGI) and Artificial Super Intelligence (ASI): respectively, AI as capable as human beings at most cognitive tasks, and AI that surpasses the abilities of human beings. In its pursuit, the group has never shied away from controversy, adopting tactics like blocking entrances to office buildings or chaining their doors shut.

Last November, they made national headlines when a public investigator hopped on stage to serve a subpoena on OpenAI CEO Sam Altman during an event with Golden State Warriors coach Steve Kerr. On social media, Stop AI claimed responsibility for the stunt. “Our public defender successfully subpoenaed Sam Altman to appear at our trial where we will be tried for non-violently blocking the front door of OpenAI on multiple occasions,” the group said in an X post. “This trial will be the first time in human history where a jury of normal people are asked about the extinction threat that AI poses to humanity.”

In the past, the group’s messaging has been direct, if not apocalyptic. There was a banner, for instance, that read “AI Will Kill Us All.” Then there was the accompanying chant: “Stop AI or We’re All Gonna Die.” In recent months, though, as Stop AI’s organizers have wrestled with how to more effectively raise awareness and grow their ranks, that message has evolved. With the election last fall of new leader Matthew Hall, who goes by the name Yakko, Stop AI began moving away from focusing solely on the risk of human extinction. “I feel a moral obligation to warn people,” Yakko told The Daily Californian. “I just think when people get too scared they just kind of shut down. They still have to go about their daily business.”

What happened next hints at the broader tensions within the anti-AI movement. Kirchner, apparently frustrated by what he saw as a departure from Stop AI’s more radical message, stormed out of a November planning meeting. Later, he came back and asked for access to the organization’s funds. Agitated that his request was denied, he struck Yakko several times in the head. According to Yakko, Kirchner told him that “the non-violence ship has sailed for me”. He later apologized, but the incident led to his expulsion from Stop AI. Since then, though, Kirchner has vanished, not turning up for a scheduled court date. His bike and camping gear have disappeared from his house too. Out of an abundance of caution, a member of Stop AI called the police to say that Kirchner could potentially pose a threat to people working at AI labs. That led to lockdowns at OpenAI, though members of Stop AI maintain they are not aware of any specific threats.

Either way, Stop AI was quick to post a message on X detailing the situation, reaffirming its commitment to non-violence. Pause AI equally condemned Kirchner’s actions, explicitly stressing that it “has no affiliation with him or his organization”. For its part, Pause AI’s Code of Conduct explicitly prohibits violence, while also requiring compliance with any applicable laws. Pause AI CEO Maxime Fournes adds that the movement has always been “extremely peaceful”. That’s echoed by a broader debate about the perils of a certain kind of apocalyptic anti-AI messaging. “An early activist at Stop AI had a mental health crisis and went missing,” Remmelt Ellen, an advisor to Stop AI, warned in an X post. “Act with care. Find Sam. Stop with the ‘AGI may kill us all by 2027’ shit please.”

It’s impossible now to know what Kirchner might have been thinking, what pressures he may have been under. Yet three months after his initial disappearance, the 27-year-old activist is still missing. According to Kirchner’s friends and allies, he had been under a lot of stress, and the thought that AI might cause harm to his sister was consuming him. “He had the weight of the world on his shoulders,” one of the group’s members told reporters.

Lately, Stop AI has been engaged in the delicate task of sorting out how to continue its work in spite of everything that’s happened — and despite the fact that Kirchner has still not been found. For its last public event of 2025, Stop AI hosted what it called “A Vigil for Humanity”. Tellingly, perhaps, it wasn’t held at OpenAI or Anthropic, but rather at San Francisco City Hall.

The tone was decidedly somber. A video posted online shows members holding lanterns in the waning daylight. Someone in the group explains who Stop AI is and what they’re doing there. There are warnings about the extinction risk. But then the conversation turns toward the broader value of human life. “We are going to take a moment to meditate and pray that every human life is protected, including the lives of the people who work at these companies… even though we deeply disagree with what they’re choosing to dedicate their lives to… even though they’re pursuing something that is dangerous. We think their lives are sacred and important too.”

***

While in San Francisco, I planned to go to a Stop AI protest at OpenAI’s headquarters, but then learned that the event had been canceled. I considered going by the offices anyway, just to get a sense of the place. Instead, I connect with Wynd Kaufmyn, who is one of the group’s organizers. We meet at a restaurant near the Fruitvale BART station; she’s planning to attend a nearby protest against the Trump administration’s immigration policy.

Now in her sixties, Kaufmyn came to the Bay Area as a Berkeley graduate student, drawn in by its radical politics and the lingering energy of the Free Speech Movement, and never left. She met Reichstadter and Kirchner at a protest in support of Palestine at Andrews Air Force Base. The pair shared some information about Stop AI, and Kaufmyn started going to meetings. Now, more than a year later, she’s a member of the group’s leadership council. “The reason that we’re having this AI problem right now is that we have corporations running the world,” she tells me. “And they don’t care what happens to people. So, for me, this is really about being anti-establishment, being anti-fascist.”

Stop AI, Kaufmyn explains, has been talking a lot more lately about AI skepticism as a big-tent issue, encompassing concerns about more immediate risks — like autonomous weaponry, surveillance, mass resource use, and job loss — alongside existential risk. “We want to be honest about what’s at stake here,” she says. “But we don’t want the message to be all doom.”

Certainly, Kaufmyn worries that anxiety about impending catastrophe is what led to Kirchner’s disappearance. “I could tell he was really depressed,” she says. “And I wanted to help him, but he was always very guarded.” She echoes the observation that he had become increasingly frantic that AI might hurt or kill his sister. “There was an article in the San Francisco Standard that I thought put it well,” Kaufmyn continues. “Is it possible to protest the end of the world without losing your mind?”

In recent months, Stop AI has been in a regrouping phase — trying to understand what happened and how to keep it from ever happening again. Kaufmyn explains they’ve been working on strengthening the organization from the inside out, building a strong internal culture so that they can reach more people outside of the movement. They’re being mentored, she tells me, by some members of Extinction Rebellion. The group is known for practicing what it calls “regenerative culture”, which tries to create healthy relationships through mutual care. But Extinction Rebellion is also among those in the environmental space most willing to aggressively engage on the AI issue.

Last summer, two Extinction Rebellion activists protested Meta’s AI expansion by chalk-spraying the windows at its New York offices with messages like “Meta Makes Kings” and “Zuck Loves Trump”. Earlier this month, Reichstadter shared an X post from Extinction Rebellion Founder Roger Hallam, announcing the Zoom launch of a group called “Pull the Plug” in the UK. “Give ordinary people a say in how AI is used in our lives,” its website says. Later this month, the group is hosting what’s been billed as the UK’s “first-ever anti-AI march” at OpenAI’s London offices. It’s called the “March Against the Machines”.

Before I leave San Francisco, I tag along with Kaufmyn for part of the immigration policy protest. It’s crowded in Fruitvale Commons. Some of the activists shout over a megaphone. Others hold a huge white sign with red lettering that says: “People Over Billionaires.” Kaufmyn surveys the banner and reads the words out loud. “People over billionaires… I like that.” For a moment, we stand in silence. “I have one last question,” I tell her. “What do you think your goal is for Stop AI?” She shifts her weight a little. “I would like everybody from every walk of life to stand up to these billionaires — through civil disobedience, a hunger strike, however — and demand that they aren’t building harmful technologies that could destroy our lives when we are telling them not to.” A different battle in the same fight within the AI-skeptic movement, and one Kaufmyn and others have fought many times before.
